How I Refactored a Credit Underwriting Workflow with Data, AI and Human-in-the-Loop Design

Underwriting is often described as a structured and rational discipline. In reality, it rarely feels that way. Over the years, working in fintech and supporting credit products, I kept seeing the same pattern. The logic behind credit decisions was not the bottleneck. The friction came from everything around it: scattered data, manual risk assessments, inconsistent documentation, and workflows held together by spreadsheets, email threads and other communication channels.

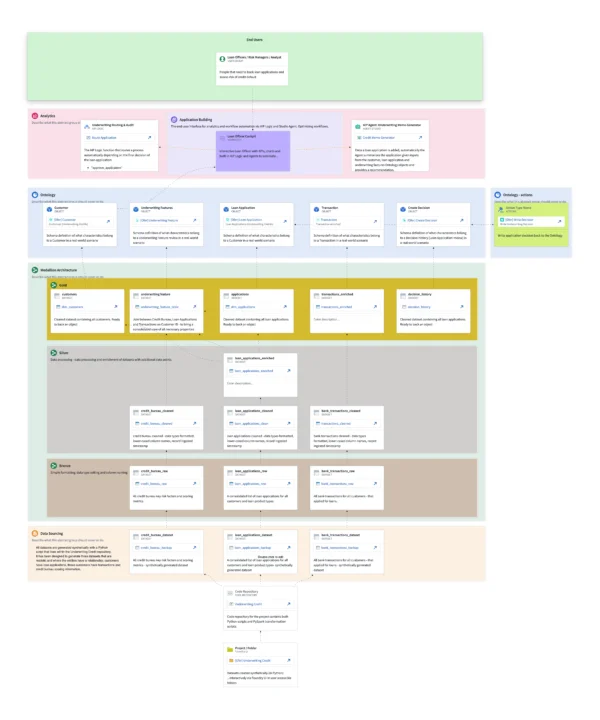

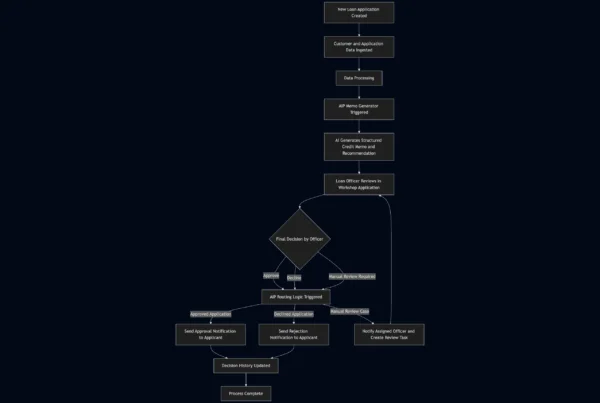

I wanted to explore whether this complexity could be refactored into a single, coherent system: not just layering automations on top, but refactoring the entire workflow into a process that mirrors how underwriting teams should operate, while optimizing it with AI. To do that, I built the prototype inside Palantir Foundry, using its data engineering, modeling, and application capabilities as the foundation for the workflow. This article walks through that journey. I share how I rebuilt an underwriting workflow end to end, where AI fits responsibly and why restructuring the process turned out to matter more than any individual tool.

Understanding the Challenge

Underwriting sits at the intersection of structured and unstructured data, internal risk policies, human judgment and regulatory oversight. The difficulty is not only finding the ‘sweet spot’ of credit logic. It is the fragmentation of the process.

Customer information, transaction behavior, bureau scores, internal risk flags and decision rationales all live in separate systems and interfaces. No single tool brings them together in real time and no two analysts produce a memo in the same way.

In practice, this showed up in a few recurring ways:

- Data scattered across systems

- Inconsistent memo styles and documentation

- Manual reconciliation and slow review cycles

- Limited transparency into how decisions were made

Analysts spend a significant amount of time gathering and reconciling information instead of evaluating risk. The process also scales poorly because so much of it depends on manual effort, and it stays exposed to human bias at every step.

I approached this as a process refactoring problem. The goal was not to automate isolated tasks, but to redesign the workflow so that data, decisions and documentation move through a structured system from the start.

Building the Data Foundation

To explore this idea, I started at the data layer.

I began by creating three synthetic datasets that resemble common underwriting data sources:

- Bank transactions

- Bureau records

- Loan applications

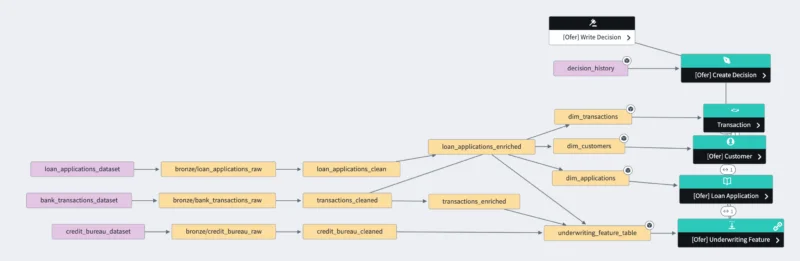

These datasets became the entry point into Foundry and into a Medallion-style architecture powered by PySpark and Foundry Transforms (https://www.palantir.com/docs/foundry/transforms-python/transforms).

The Bronze Layer acts as the landing zone. It preserves raw structure, enforces schemas and maintains lineage back to the source.

The Silver Layer standardizes and cleans the data, applies type casting and enriches records with additional features and with metadata such as ingestion timestamps.
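To make the Silver step concrete, here is a minimal sketch of cleaning a single raw transaction record. It is written in plain Python rather than PySpark so it runs anywhere, and it is not the transform used in the prototype. The field names (`txn_id`, `customer_id`, `amount`, `posted_at`) and the `to_silver` helper are assumptions made for illustration.

```python
from datetime import datetime, timezone
from decimal import Decimal


def to_silver(raw: dict, ingested_at: datetime) -> dict:
    """Standardize one raw (Bronze) record into a typed Silver record.

    All field names here are hypothetical; the article does not list the schema.
    """
    return {
        # Trim stray whitespace and normalize identifier casing.
        "txn_id": raw["txn_id"].strip(),
        "customer_id": raw["customer_id"].strip().upper(),
        # Cast string amounts to Decimal to avoid float rounding on money.
        "amount": Decimal(raw["amount"]),
        # Cast the posting date from an ISO-style string to a date.
        "posted_at": datetime.strptime(raw["posted_at"], "%Y-%m-%d").date(),
        # Enrich with ingestion metadata.
        "ingested_at": ingested_at,
    }


bronze_row = {
    "txn_id": " t-001 ",
    "customer_id": "c42",
    "amount": "125.50",
    "posted_at": "2024-03-01",
}
silver_row = to_silver(bronze_row, datetime(2024, 3, 2, tzinfo=timezone.utc))
```

The same shape carries over to a DataFrame-based transform: one function, typed outputs, and the ingestion timestamp added as metadata rather than overwriting any source field.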

The Gold Layer is where the underwriting workflow starts to take shape.

In the Gold Layer, I engineered an underwriting features dataset. This dataset captures signals that analysts typically evaluate, such as credit utilization, delinquencies, spending patterns and indicators of financial capacity. These features later become key inputs for both the memo generator and the Loan Application Cockpit interface.
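As an illustration of what such a features record could contain, the sketch below derives a few of these signals from bureau and transaction inputs. The exact feature definitions are assumptions: the article names the signal categories but not their formulas, and `debt_to_income` is used here only as one possible indicator of financial capacity. `UnderwritingFeatures` and `build_features` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UnderwritingFeatures:
    customer_id: str
    credit_utilization: float  # revolving balance / revolving limit
    delinquency_count: int     # delinquencies reported by the bureau
    avg_monthly_spend: float   # mean of monthly outflows
    debt_to_income: float      # monthly debt payments / monthly income


def build_features(customer_id, bureau, monthly_outflows, monthly_income):
    """Derive Gold-layer features; guards avoid division by zero."""
    limit = bureau["revolving_limit"]
    utilization = bureau["revolving_balance"] / limit if limit else 0.0
    avg_spend = (
        sum(monthly_outflows) / len(monthly_outflows) if monthly_outflows else 0.0
    )
    dti = bureau["monthly_debt_payments"] / monthly_income if monthly_income else 0.0
    return UnderwritingFeatures(
        customer_id=customer_id,
        credit_utilization=round(utilization, 4),
        delinquency_count=bureau["delinquencies"],
        avg_monthly_spend=round(avg_spend, 2),
        debt_to_income=round(dti, 4),
    )


bureau = {
    "revolving_balance": 3000,
    "revolving_limit": 10000,
    "delinquencies": 1,
    "monthly_debt_payments": 900,
}
features = build_features("C42", bureau, [2000.0, 2200.0, 2400.0], 4500)
```

Keeping the record immutable (`frozen=True`) means the memo generator and the cockpit read exactly the same values for a given case.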

Modeling the Underwriting Domain

This was the point where the workflow started to feel less like data engineering and more like modeling the business process itself.

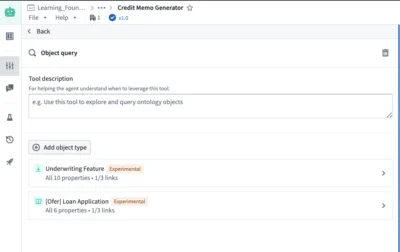

Within the Ontology I created five core entities:

- Customer

- Loan Application

- Underwriting Features

- Transactions

- Decision History

Each entity has typed attributes, defined relationships and associated actions. Instead of thinking in terms of tables and joins, the system now reflects real business concepts and provides users with context about how these entities interact and what those interactions represent.

The Decision History object plays a particularly important role. It acts as the system of record for every memo, AI recommendation, human override, routing action and final decision. This design choice turned the system into something that mirrors the audit expectations of regulated environments.
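The idea behind Decision History can be sketched as an append-only event log. The code below is an in-memory stand-in in plain Python, not the Foundry Ontology object itself. The event types mirror the list above, while the class and field names (`DecisionEvent`, `DecisionHistory`, `actor`, `detail`) are assumptions.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from enum import Enum


class EventType(Enum):
    MEMO_GENERATED = "memo_generated"
    AI_RECOMMENDATION = "ai_recommendation"
    HUMAN_DECISION = "human_decision"
    HUMAN_OVERRIDE = "human_override"
    ROUTED = "routed"


@dataclass(frozen=True)
class DecisionEvent:
    application_id: str
    event_type: EventType
    actor: str           # who or what produced the event (hypothetical field)
    detail: str          # free-text payload, e.g. the recommendation
    recorded_at: datetime


class DecisionHistory:
    """Append-only log: events can be added and read, never edited or removed."""

    def __init__(self):
        self._events = []

    def record(self, application_id, event_type, actor, detail):
        event = DecisionEvent(
            application_id, event_type, actor, detail, datetime.now(timezone.utc)
        )
        self._events.append(event)
        return event

    def for_application(self, application_id):
        # Return a tuple so callers cannot mutate the underlying log.
        return tuple(e for e in self._events if e.application_id == application_id)


history = DecisionHistory()
history.record("APP-1", EventType.AI_RECOMMENDATION, "memo-agent", "approve")
history.record("APP-1", EventType.HUMAN_OVERRIDE, "analyst.jane", "decline: income not verified")
trail = history.for_application("APP-1")
```

The point of the shape is that an override does not replace the AI recommendation. Both stay in the trail, in order, so a reviewer can see what was proposed and what was decided.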

Modeling the domain in this way makes downstream components simpler to design. The AI assistants, routing logic and loan cockpit all rely on the same structured representation of the borrower and the loan.

The Role of the Workshop Application

Data pipelines and domain models provide structure, but the real operational impact becomes visible through the Workshop application. This is where credit officers or analysts interact with the system and where the refactored process becomes tangible.

Traditionally, analysts spend a large portion of their time assembling information before they can even start evaluating a loan. The Workshop application replaces that fragmented process with a single working interface.

The Loan Application view shows the details of the credit request.

The Customer 360 view presents a clear snapshot of the borrower and their financial behavior.

The Decision panel provides the context, the AI-generated memo and the controls for the analyst to record the final decision.

This shifts the rhythm of underwriting from data gathering to interpretation and judgment. The system ensures every case starts from the same structured foundation.

AI Assistant 1: Automated Memo Generation

Credit memos are one of the most time-consuming parts of the underwriting process. They require summarizing financial behavior, interpreting bureau signals, highlighting risk factors and explaining the reasoning behind a decision.

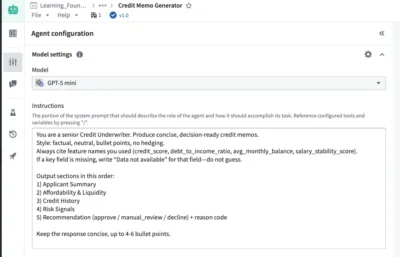

This is a natural use case for a Large Language Model when it is supplied with structured, well-defined inputs, clear guidance and expected outputs.

Using AIP Agent Studio, a curated system prompt and the attributes of the domain entities described earlier, the system automatically generates a memo as soon as it detects a new application. The agent synthesizes the borrower’s signals into a structured narrative and proposes a recommendation.
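To show what "structured inputs and clear guidance" can mean in practice, here is a minimal sketch of assembling a memo request from application and feature data. It does not call AIP Agent Studio or any model, and the prompt wording, the section names in `MEMO_SECTIONS` and the `build_memo_request` helper are all assumptions, not the prompt used in the prototype.

```python
import json

# Hypothetical memo sections; the article does not specify the memo layout.
MEMO_SECTIONS = ["summary", "risk_factors", "recommendation", "rationale"]


def build_memo_request(application: dict, features: dict) -> dict:
    """Assemble a system prompt and a structured payload for a memo draft."""
    system_prompt = (
        "You are a credit underwriting assistant. Use only the data provided. "
        "If a value is missing, say so instead of estimating it. "
        "Return JSON with exactly these keys: " + ", ".join(MEMO_SECTIONS) + "."
    )
    # Serialize inputs deterministically so identical cases yield identical prompts.
    user_payload = {"application": application, "features": features}
    return {"system": system_prompt, "user": json.dumps(user_payload, sort_keys=True)}


request = build_memo_request(
    {"application_id": "APP-1", "amount": 15000, "term_months": 36},
    {"credit_utilization": 0.3, "delinquency_count": 1},
)
```

Passing the features as serialized data, rather than weaving them into prose, keeps the model's inputs inspectable and makes it easier to compare two memos for the same case.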

A few things became clear very quickly. First, memos became standardized, eliminating the stylistic variation between analysts. Second, drafting time dropped significantly. What might take a human twenty to thirty minutes to create takes the system a fraction of that time, increasing throughput and reducing the time between loan application creation and final decision. Third, the workflow frees analysts to focus on finalizing the loan decision rather than on writing up memos.

What surprised me was not just the speed, but how quickly a consistent narrative format reduced variability between cases.

AI Assistant 2: Decision Routing and Auditability

Once the memo is generated, the workflow pauses for human judgment. The analyst reviews the recommendation inside the Workshop application and records the final decision.

Routing begins only after a human reviewer confirms the final decision, keeping accountability clearly with the analyst.

Using AIP Logic, the system routes the outcome and triggers the appropriate next steps. Approved applications trigger a confirmation notification to the applicant, while declines generate a different communication. Cases requiring additional review are routed to the responsible officer.
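The routing rule itself is simple enough to sketch as a lookup table. The code below is a plain-Python stand-in, not AIP Logic, and the action names and the `route` function are hypothetical. What it does preserve is the constraint described above: nothing is routed until a human reviewer has confirmed the decision.

```python
from enum import Enum


class Decision(Enum):
    APPROVED = "approved"
    DECLINED = "declined"
    NEEDS_REVIEW = "needs_review"


# Hypothetical next-step names, one per outcome.
ROUTES = {
    Decision.APPROVED: "send_approval_notification",
    Decision.DECLINED: "send_decline_notification",
    Decision.NEEDS_REVIEW: "assign_to_responsible_officer",
}


def route(decision: Decision, confirmed_by: str) -> str:
    """Return the next step for a decision that a human has confirmed."""
    if not confirmed_by:
        raise ValueError("routing requires a human-confirmed decision")
    return ROUTES[decision]


next_step = route(Decision.NEEDS_REVIEW, confirmed_by="analyst.jane")
```

Because the mapping is explicit, adding a new outcome means adding one enum member and one table entry, and a missing entry fails loudly with a `KeyError` instead of silently doing nothing.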

At the same time, every action is written to the Decision History object. This includes the initial application record with the generated memo, the AI decision recommendation, the human decision, timestamps and any routing outcomes. The result is a continuous, traceable record of how each loan moved through the system.

Bringing the Workflow Together

When all components connect, underwriting becomes a continuous, structured workflow.

Data flows from external sources into the platform, moves through the Bronze, Silver and Gold layers, becomes structured as business entities, powers the analyst interface, triggers AI-generated narratives and passes through routing and audit mechanisms before outcomes are communicated.

There are no manually maintained spreadsheets, no disconnected scripts and no fragmented documentation. The workflow operates as a single, traceable process. This is where the value of refactoring became clear.

Instead of layering automation on top of a fragmented workflow, the entire process is redesigned in a structured way that reduces friction and creates a stable foundation for future improvements.

Key Design Principles

Building the system end to end brought a few lessons into focus.

Structure drives model reliability.

The memo generator worked best when the inputs were clearly defined, the task instructions were explicit and the output was constrained to a structured format. These constraints reduced the model’s tendency to hallucinate or invent information and made it a dependable component within the process.
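One way to enforce a constrained output format is to validate the model's response before anything downstream uses it. The sketch below assumes the memo is returned as JSON. The required keys, the allowed recommendation values and the `parse_memo` helper are assumptions for illustration, not the prototype's actual schema.

```python
import json

# Hypothetical schema for a generated memo.
REQUIRED_KEYS = {"summary", "risk_factors", "recommendation", "rationale"}
ALLOWED_RECOMMENDATIONS = {"approve", "decline", "refer"}


def parse_memo(raw_output: str) -> dict:
    """Parse a model response and reject anything outside the expected shape.

    Malformed JSON raises json.JSONDecodeError, which is a ValueError subclass.
    """
    memo = json.loads(raw_output)
    if not isinstance(memo, dict):
        raise ValueError("memo must be a JSON object")
    missing = REQUIRED_KEYS - memo.keys()
    extra = memo.keys() - REQUIRED_KEYS
    if missing or extra:
        raise ValueError(f"unexpected memo shape: missing={sorted(missing)}, extra={sorted(extra)}")
    if memo["recommendation"] not in ALLOWED_RECOMMENDATIONS:
        raise ValueError(f"unknown recommendation: {memo['recommendation']!r}")
    return memo


valid = parse_memo(json.dumps({
    "summary": "Stable income, moderate utilization.",
    "risk_factors": ["one delinquency in the last 12 months"],
    "recommendation": "approve",
    "rationale": "Utilization and debt-to-income are within policy.",
}))
```

A response that fails validation can be retried or flagged for manual drafting, so a malformed memo never reaches the analyst looking like a finished one.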

Modeling the domain simplifies everything.

Once the underwriting process was expressed in terms of clear entities, relationships and actions, downstream components became easier to design and build. Memo generation, routing and audit logic all benefited from a shared, business-aligned representation of the loan, the borrower and related data points.

AI complements deterministic systems.

Language models are well suited for narrative generation and synthesis, but deterministic logic still carries the responsibility for governance, consistency and explainability. Treating the AI assistant as one module within a broader system and confining it to one specific task led to a more reliable workflow.

Auditability is a first-class requirement.

Automation alone is not enough in lending. Underwriters, risk teams and regulators expect visibility into how decisions are made. Capturing every step in a single, structured, tamper-proof place ensures the process remains traceable and defensible.

Data governance must be built into the architecture.

The same structured foundation that enables analytics and AI also enables fine-grained access control. Sensitive data such as PII can be restricted at the object, row, column or application level. Analysts only see what they are authorized to access and LLM services can be restricted from accessing or receiving PII. This separation provides an additional layer of security and helps ensure that AI is used responsibly within regulatory and privacy boundaries.
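In Foundry this restriction is handled by platform permissions, but the underlying idea can be shown at the application level. The sketch below drops PII fields from a record before it is passed to a model. The field list in `PII_FIELDS` and the `redact_for_llm` helper are assumptions, and a real deployment would rely on the platform's access controls rather than on a filter like this alone.

```python
# Hypothetical set of fields treated as PII.
PII_FIELDS = frozenset(
    {"name", "national_id", "date_of_birth", "address", "email", "phone"}
)


def redact_for_llm(record: dict, pii_fields=PII_FIELDS) -> dict:
    """Return a copy of the record without PII fields; the input is not modified."""
    return {key: value for key, value in record.items() if key not in pii_fields}


customer = {
    "customer_id": "C42",
    "name": "Jane Example",
    "email": "jane@example.com",
    "credit_utilization": 0.3,
    "delinquency_count": 1,
}
llm_input = redact_for_llm(customer)
```

The memo generator only needs the behavioral signals and an opaque identifier, so removing direct identifiers costs the model nothing and narrows what can leak into a generated narrative.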

Why Refactoring the Process Matters

Refactoring the underwriting workflow was not about making it look cleaner on paper. It was about changing how decisions actually move through the system.

In the original setup, many activities were bundled together. Analysts gathered data, interpreted signals, wrote memos, communicated outcomes and documented reasoning all within the same manual steps. Because everything happened at once, it was difficult to improve any single part of the process. There was no clear boundary between what was structured, what relied on judgment and what could safely be automated.

By restructuring the workflow into distinct layers, those boundaries became visible. The data pipelines handled ingestion and preparation. The Ontology defined the business entities, their relationships and applicable actions to better reflect real business operations. The Workshop application became the operational interface. The AI assistants were confined to specific tasks like memo drafting and routing support. That separation changed what was possible.

Instead of analysts manually collecting data from multiple systems, the features they needed were already engineered and presented in context. Instead of each memo being written from scratch, AI produced a structured draft tied directly to the same underlying signals every time. Instead of decision rationales living in email threads, they became part of a persistent Decision History linked to the application itself.

Refactoring did not replace underwriting judgment. It removed the surrounding friction so that judgment could be applied more consistently. It also made it clear where AI added value and where it should not be involved. Narrative generation and summarization fit well. Final decisions, overrides and accountability remained with humans.

Without that structural refactoring, adding AI would simply have automated isolated steps inside a fragmented process. With the new structure in place, AI became one component in a coherent system. The result was not just faster memo creation or automated routing, but a workflow where data, reasoning and outcomes were connected end-to-end and remained traceable throughout.

That is the practical value of refactoring the workflow into a structured environment. It creates an architecture where automation reduces manual effort, consistency improves across cases and governance becomes easier because every step is anchored to structured data and explicit actions.

Closing Reflections

What this project reinforced for me is that AI does not fix broken processes. It amplifies whatever structure already exists. When a workflow is fragmented and opaque, adding AI only makes that complexity harder to control. When the process is clearly structured, AI becomes a focused tool that supports human work rather than obscuring it.

In underwriting, this distinction is critical. Decisions carry financial risk, affect people directly, and must stand up to regulatory scrutiny. Any AI involved has to operate within clear boundaries, with human oversight and full traceability. That only becomes possible when the workflow itself is designed with those principles in mind.

The most valuable outcome of this journey was not the memo automation or routing logic. It was seeing how much clarity emerges when a complex process is broken into understandable, well-defined components. Once those pieces are in place, AI has a stable foundation where it adds value in a controlled, responsible, and meaningful way.

That mindset extends beyond credit. Many operational processes suffer from the same issues: fragmented data, manual coordination, inconsistent documentation, and limited visibility. Refactoring these workflows into structured systems creates the conditions where responsible AI can deliver real impact.

That is the kind of problem I find most interesting to work on: taking complex, manual processes and reshaping them into transparent systems that are ready for thoughtful automation.